Buddhism is often described as a philosophy, a spiritual path, and a religion devoted to understanding the nature of suffering and achieving enlightenment. Since its origin in ancient India more than 2,500 years ago through the teachings of Siddhartha Gautama, Buddhism has spread across Asia and the world, developing rich traditions of rituals, ceremonies, festivals, and sacred objects. While the different schools of Buddhism, such as Theravada, Mahayana, and Vajrayana, have distinct practices, many of their rituals and celebrations share common themes of reverence, compassion, mindfulness, and gratitude.

Rituals in Buddhism are not generally viewed as acts that earn divine favour. Rather, they are tools that help practitioners cultivate wisdom, focus the mind, honour the teachings, and strengthen community bonds.

The Purpose of Buddhist Rituals

Buddhist rituals serve several important functions. They help practitioners remember the teachings of the Buddha, express devotion, cultivate mindfulness, and mark significant moments in life and in the religious calendar.

Many rituals involve:

Meditation

Chanting sacred texts

Offering flowers, incense, and candles

Bowing or prostrations

Pilgrimage

Acts of generosity and charity

Commemorative ceremonies

These activities encourage practitioners to develop virtues such as compassion, humility, gratitude, and awareness.

The symbolism behind a ritual is often as important as the ritual itself. For example, flowers placed before a Buddha statue remind devotees of impermanence: just as the flowers eventually wilt and fade, so do all things.

Daily Rituals

Offering Rituals

One of the most common Buddhist rituals involves making offerings before an image of the Buddha. These offerings may include:

Flowers

Incense

Candles

Water

Fruit

Food

Each offering carries symbolic meaning. Incense represents moral conduct and spiritual purification. Candles symbolize wisdom that dispels ignorance. Flowers remind practitioners of impermanence.

Offerings are not made because Buddhists believe the Buddha requires them. Rather, they represent respect and gratitude for his teachings.

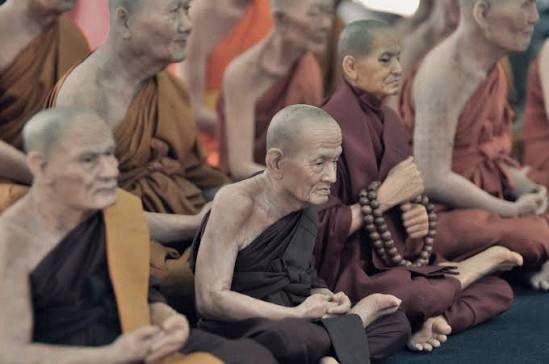

Chanting

Chanting is a central practice throughout Buddhist traditions. Sacred texts, known as sutras (Sanskrit) or suttas (Pali), are recited individually or in groups.

Common purposes include:

Developing concentration

Preserving teachings

Generating merit

Inspiring devotion

Creating a peaceful mental state

In Theravada countries such as Thailand, Sri Lanka, and Myanmar, chants are often recited in the ancient language of Pali. In Mahayana traditions, chants may be performed in Chinese, Japanese, Korean, Tibetan, or local languages.

Meditation

Meditation remains one of Buddhism’s most important ritual practices. Different forms include:

Mindfulness meditation

Loving-kindness meditation

Insight meditation

Zen sitting meditation

Visualization practices

Meditation helps practitioners understand the nature of the mind and develop wisdom and compassion.

Bowing and Prostrations

Bowing before a Buddha image is a common expression of humility and respect.

In many traditions, practitioners perform three bows representing reverence for:

The Buddha

The Dharma (teachings)

The Sangha (community)

Full-body prostrations are especially important in Tibetan Buddhism, symbolizing surrender of pride and cultivation of humility.

Life-Cycle Rituals

Birth Ceremonies

Many Buddhist communities hold ceremonies to bless newborn children. Monks may chant protective scriptures and offer blessings for health, wisdom, and happiness.

Parents often bring infants to a temple to receive their first blessing shortly after birth.

Coming-of-Age Rituals

In countries such as Thailand and Myanmar, temporary ordination into the monastic community, as a novice or as a monk, is an important rite of passage for boys and young men.

The experience teaches discipline, meditation, and Buddhist values.

Marriage Ceremonies

Although marriage is generally considered a social rather than a religious institution in Buddhism, Buddhist weddings often include:

Blessings from monks

Chanting

Offerings

Exchange of vows

Sprinkling of holy water

The ceremony emphasizes mutual respect, kindness, and responsibility.

Funeral Rituals

Funerals are among the most significant Buddhist ceremonies.

Death is viewed as part of the cycle of birth, death, and rebirth. Funeral rituals help family members honour the deceased and reflect on impermanence.

Common practices include:

Chanting by monks

Offerings

Meditation

Merit-making activities

Memorial services

In many traditions, ceremonies continue for days or weeks after death to assist the deceased on their spiritual journey.

Major Buddhist Celebrations

Vesak (Buddha Day)

Vesak is the most important Buddhist festival worldwide.

It commemorates three events that, according to tradition, all fell on the full moon of the same lunar month, though in different years:

The Buddha’s birth

His enlightenment

His passing into final Nirvana (parinirvana)

During Vesak celebrations, Buddhists:

Visit temples

Participate in meditation

Offer food to monks

Listen to teachings

Release birds or animals as acts of compassion

Engage in charitable activities

Temples are often decorated with lanterns, flowers, and colourful displays.

Magha Puja

Celebrated primarily in Thailand, Laos, and Cambodia, Magha Puja commemorates a gathering of 1,250 enlightened disciples who, according to tradition, assembled without prior arrangement to hear the Buddha teach.

The day emphasizes:

Ethical conduct

Meditation

Respect for the monastic community

Candlelight processions around temples are a common feature.

Asalha Puja

Asalha Puja marks the Buddha’s first sermon following enlightenment.

The sermon introduced the Four Noble Truths and established the foundation of Buddhist teaching.

This festival celebrates the beginning of the Sangha and the spread of Buddhism.

Obon Festival

Obon is one of Japan’s most beloved Buddhist celebrations.

The festival honours ancestors and deceased family members. Traditional activities include:

Lantern ceremonies

Temple visits

Family gatherings

Traditional dances known as Bon Odori

Many believe ancestral spirits return to visit their families during this period.

Losar

Losar is the Tibetan Buddhist New Year celebration.

The festival includes:

Prayer ceremonies

Offerings

Family gatherings

Traditional music and dance

Temple visits

Losar symbolizes renewal and spiritual purification.

Pilgrimage Rituals

Pilgrimage plays an important role in Buddhist devotion.

Many Buddhists visit sacred sites associated with the Buddha’s life.

Important pilgrimage locations include:

Lumbini – birthplace of the Buddha

Bodh Gaya – site of enlightenment

Sarnath – location of the first sermon

Kushinagar – place of the Buddha’s passing

Pilgrims often meditate, make offerings, and walk mindfully around sacred monuments.

Ritual Instruments and Sacred Equipment

Buddhist ceremonies employ many ritual objects that symbolize spiritual truths and assist practitioners in meditation and worship.

Bells

Bells are among the most important Buddhist ritual instruments.

Their sound symbolizes:

Wisdom

Impermanence

Awakening

Temple bells announce ceremonies, mark meditation periods, and call practitioners to prayer.

In Tibetan Buddhism, bells are often paired with a vajra.

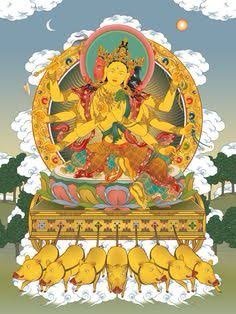

Vajra

The vajra is a ritual object especially important in Vajrayana Buddhism.

It symbolizes:

Spiritual power

Enlightenment

Indestructible truth

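During ceremonies, monks may hold a vajra in one hand and a bell in the other. The vajra represents compassion, or skillful means, and the bell represents wisdom, so holding them together expresses the union of wisdom and compassion.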

Prayer Wheels

Prayer wheels contain sacred mantras written on paper scrolls.

Practitioners spin them while reciting prayers or meditating. Each rotation is believed to carry the same benefit as reciting the mantras inside, multiplying their beneficial effects.

Prayer wheels are common throughout Tibet, Nepal, Bhutan, and other Himalayan Buddhist communities.

Prayer Beads (Mala)

A mala consists of beads used to count prayers, mantras, or breaths during meditation.

Most malas contain 108 beads, a number often associated in Buddhism with the 108 mental defilements or afflictions.

Malas assist concentration and mindfulness.

Drums

Ceremonial drums are widely used in Tibetan and East Asian Buddhism.

Their rhythmic sound:

Marks ritual timing

Accompanies chanting

Creates spiritual atmosphere

Large temple drums can often be heard over great distances.

Cymbals

Ritual cymbals help maintain rhythm during ceremonies and chanting.

In Tibetan monasteries, cymbals contribute to elaborate ritual music intended to focus attention and create sacred space.

Conch Shell

The conch shell is one of Buddhism's ancient sacred symbols and is counted among the Eight Auspicious Symbols.

When blown, its powerful sound represents the Dharma spreading throughout the world and reaching all beings.

Incense Burners

Burning incense is a nearly universal Buddhist ritual.

Incense symbolizes:

Purification

Ethical conduct

Spiritual aspiration

The rising smoke represents prayers and intentions extending into the universe.

Butter Lamps

Particularly common in Tibetan Buddhism, butter lamps symbolize the light of wisdom overcoming ignorance.

Devotees often sponsor lamps as acts of merit and remembrance.

Singing Bowls

Singing bowls produce resonant tones when struck or rubbed with a mallet.

They are used for:

Meditation

Relaxation

Ritual ceremonies

Mindfulness practices

The sustained sound encourages concentration and inner calm.

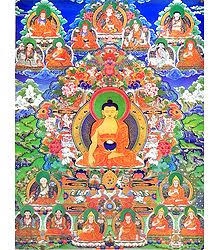

Buddha Statues

Although the Buddha is not worshipped as a god, Buddha images serve as important focal points for devotion and meditation.

Different postures and hand gestures (mudras) symbolize various aspects of the Buddha's life and teachings, including:

Meditation

Teaching

Enlightenment

Compassion

Stupas and Pagodas

Stupas and pagodas are sacred structures that often contain relics or commemorate important events.

Walking clockwise around a stupa while praying or meditating is a common devotional practice known as circumambulation.

Conclusion

Buddhist rituals, festivals, and sacred instruments form an essential part of Buddhist religious life. While meditation and personal spiritual development remain at the heart of Buddhist practice, rituals provide meaningful ways for individuals and communities to express devotion, preserve traditions, and deepen their understanding of the Dharma.

From the global celebration of Vesak to the quiet offering of incense before a Buddha image, Buddhist rituals serve as reminders of compassion, mindfulness, wisdom, and impermanence. Sacred instruments such as bells, prayer wheels, malas, vajras, and singing bowls enrich these practices, helping practitioners focus their minds and connect with centuries of spiritual heritage.

Across the many cultures and traditions in which Buddhism has flourished, rituals continue to provide a bridge between ancient teachings and contemporary life, ensuring that the message of the Buddha remains vibrant, relevant, and inspiring for millions of people around the world.

Tim Alderman ©️ 2026